- Charmed Labs Pixy2 Robot Vision Image Sensor

- Smaller and faster (60 frames per second) than the previous Pixy1

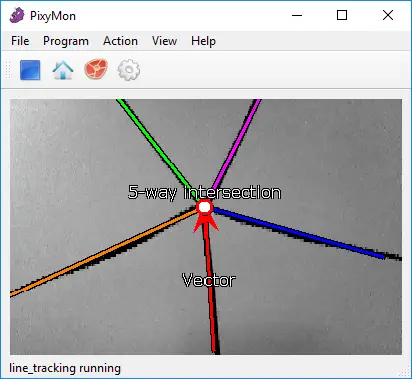

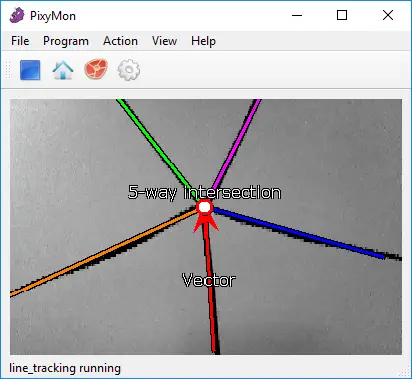

- New “line following” mode with custom algorithms for detecting and tracking lines

- Improved PixyMon software (PC/Mac/Linux application)

The Charmed Labs Pixy 2.1 Robot Vision Image Sensor is smaller, faster and more capable than the original Pixy.

Pixy2 can learn to detect objects that you teach it, just by pressing a button. Additionally, it has new algorithms that detect and track lines for use with line-following robots. The new algorithms can detect intersections and “road signs” as well. The road signs can tell your robot what to do, such as turn left, turn right, or slow down.

And Pixy2 does all of this at 60 frames per second, so your robot can be fast, too. Pixy2 comes with a special cable to plug directly into an Arduino and a USB cable to plug into a Raspberry Pi, so you can get started quickly. No Arduino or Raspberry Pi? No problem! Pixy2 has several interfaces (SPI, I2C, UART, and USB) and simple communications, so you can get your chosen controller talking to Pixy2 in short order.

Wider field-of-view

The new version 2.1 has an 80-degree field of view, which is wider than that of the previous version. The wider field of view comes with some spherical distortion from the new lens.

Replaceable lens and adjustable focus

The M12 lens mount lets you substitute a different lens, and the adjustable focus lets you focus on objects at practically any distance, including as close as 0.25 inch.

Less chromatic distortion and less pixel noise

The new lens has virtually no chromatic distortion at the edges, whereas the previous Pixy version had measurable chromatic distortion.

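Also, the new lens has an F-stop of 2.0, versus an F-stop of over 3.0 for the previous lens. A lower F-stop means better light-gathering ability: the light collected scales with the inverse square of the F-stop, so the new lens gathers more than twice as much light. That gives more signal and less noise for a given amount of ambient light, and less noise means better detection accuracy for this new Pixy version.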

If you want your robot to perform a task such as picking up an object, chasing a ball, locating a charging station, etc., and you want a single sensor to help accomplish all of these tasks, then vision is your sensor. Vision (image) sensors are useful because they are so flexible. With the right algorithm, an image sensor can sense or detect practically anything.

But there are two drawbacks with image sensors: 1) they output lots of data, dozens of megabytes per second, and 2) processing this amount of data can overwhelm many processors. Even if the processor can keep up with the data, much of its processing power won’t be available for other tasks.
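To put "dozens of megabytes per second" in perspective, here is a rough calculation. The 640x480 resolution and 2 bytes per pixel are illustrative assumptions, not Pixy2 sensor specifications; only the 60 fps rate comes from this description.

```python
# Back-of-the-envelope data rate for an uncompressed image stream.
# ASSUMPTION: 640x480 pixels at 2 bytes per pixel is a hypothetical sensor
# configuration chosen for illustration, not a Pixy2 specification.

def raw_data_rate_mb_per_s(width, height, bytes_per_pixel, fps):
    """Return the uncompressed stream rate in megabytes per second."""
    return width * height * bytes_per_pixel * fps / 1_000_000

vga_rate = raw_data_rate_mb_per_s(640, 480, 2, 60)
print(f"{vga_rate:.1f} MB/s")  # prints "36.9 MB/s"
```

Even this modest hypothetical stream is far more than a small microcontroller can move over a serial link, let alone analyze, which is the problem the text describes.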

Pixy2 addresses these problems by pairing a powerful dedicated processor with the image sensor. Pixy2 processes images from the image sensor and only sends the useful information (e.g. purple dinosaur detected at x=54, y=103) to your microcontroller. And it does this at frame rate (60 Hz). The information is available through one of several interfaces: UART serial, SPI, I2C, USB, or digital/analog output. So your Arduino or other microcontroller can talk easily with Pixy2 and still have plenty of CPU available for other tasks.

60 frames per second

What does “60 frames per second” mean? In short, it means Pixy2 is fast. Pixy2 processes an entire image frame every 1/60th of a second (16.7 milliseconds). This means that you get a complete update of all detected objects’ positions every 16.7 ms.

At this rate, tracking the path of a falling or bouncing ball is possible. (A ball traveling at 40 mph moves less than a foot in 16.7 ms.) If your robot is performing line following, it will typically move a small fraction of an inch between frames.
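The arithmetic behind these figures can be checked with a short sketch. The 40 mph ball and the 60 fps rate come from the text; the 1 ft/s robot speed is an assumed value used only to illustrate the line-following case.

```python
FPS = 60
FT_PER_MILE = 5280
S_PER_HOUR = 3600

def frame_period_ms(fps):
    """Time between consecutive frames, in milliseconds."""
    return 1000 / fps

def distance_per_frame_ft(speed_ft_per_s, fps):
    """Distance an object covers between two frames, in feet."""
    return speed_ft_per_s / fps

period_ms = frame_period_ms(FPS)

# A ball traveling at 40 mph, converted to feet per second.
ball_speed = 40 * FT_PER_MILE / S_PER_HOUR
ball_ft = distance_per_frame_ft(ball_speed, FPS)

# ASSUMPTION: a line-following robot moving at 1 ft/s (hypothetical speed).
robot_in = distance_per_frame_ft(1.0, FPS) * 12

print(f"frame period: {period_ms:.1f} ms")     # 16.7 ms
print(f"ball per frame: {ball_ft:.2f} ft")     # 0.98 ft
print(f"robot per frame: {robot_in:.2f} in")   # 0.20 in
```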

Purple dinosaurs (and other things)

Pixy2 detects objects with a color-based filtering algorithm called Color Connected Components (CCC). Color-based filtering methods are popular because they are fast, efficient, and relatively robust. Most of us are familiar with RGB (red, green, and blue) as a way to represent colors. Pixy2 calculates the color (hue) and saturation of each RGB pixel from the image sensor and uses these as the primary filtering parameters.
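The general idea of filtering by hue and saturation and then grouping connected pixels can be sketched as follows. This is a minimal illustration of the technique, not Pixy2's firmware: the function names, the hue range, the saturation threshold, and the use of the HSV conversion from Python's `colorsys` module are all assumptions made for the example.

```python
import colorsys
from collections import deque

def matches(pixel, hue_range, min_sat):
    """True if an (R, G, B) pixel falls inside the hue/saturation filter."""
    r, g, b = (c / 255 for c in pixel)
    hue, sat, _value = colorsys.rgb_to_hsv(r, g, b)
    return hue_range[0] <= hue <= hue_range[1] and sat >= min_sat

def find_blobs(image, hue_range, min_sat):
    """Return bounding boxes (x_min, y_min, x_max, y_max) of 4-connected
    regions of matching pixels, found with a breadth-first flood fill."""
    height, width = len(image), len(image[0])
    seen = [[False] * width for _ in range(height)]
    boxes = []
    for y in range(height):
        for x in range(width):
            if seen[y][x] or not matches(image[y][x], hue_range, min_sat):
                continue
            queue = deque([(x, y)])
            seen[y][x] = True
            x0, y0, x1, y1 = x, y, x, y
            while queue:
                cx, cy = queue.popleft()
                x0, y0 = min(x0, cx), min(y0, cy)
                x1, y1 = max(x1, cx), max(y1, cy)
                for nx, ny in ((cx + 1, cy), (cx - 1, cy),
                               (cx, cy + 1), (cx, cy - 1)):
                    if (0 <= nx < width and 0 <= ny < height
                            and not seen[ny][nx]
                            and matches(image[ny][nx], hue_range, min_sat)):
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            boxes.append((x0, y0, x1, y1))
    return boxes

ORANGE = (255, 128, 0)
GRAY = (90, 90, 90)
image = [
    [GRAY, GRAY,   GRAY,   GRAY, GRAY],
    [GRAY, ORANGE, ORANGE, GRAY, GRAY],
    [GRAY, ORANGE, ORANGE, GRAY, ORANGE],
    [GRAY, GRAY,   GRAY,   GRAY, ORANGE],
]

# Hypothetical "orange" filter: hue between 0.05 and 0.12, saturation >= 0.5.
blobs = find_blobs(image, hue_range=(0.05, 0.12), min_sat=0.5)
print(blobs)  # [(1, 1, 2, 2), (4, 2, 4, 3)]
```

The two orange regions are reported as separate blobs because gray pixels separate them. Only the bounding boxes would need to be sent to a controller, not the image.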

Changes in lighting and exposure can have a frustrating effect on color filtering algorithms, causing them to break. The hue of an object, however, remains largely unchanged with changes in lighting and exposure. Because Pixy2’s filtering algorithm is based on hue, it is robust when it comes to lighting and exposure changes.
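A small sketch shows why hue is a stable filtering parameter. It assumes the standard HSV conversion from Python's `colorsys` module and models a lighting change as a uniform scaling of the RGB values; real lighting and exposure changes are less ideal, so treat this as an illustration rather than a description of Pixy2's internals.

```python
import colorsys

def hue_sat(pixel):
    """Return (hue, saturation) of an (R, G, B) pixel, each in 0..1."""
    r, g, b = (c / 255 for c in pixel)
    hue, sat, _value = colorsys.rgb_to_hsv(r, g, b)
    return hue, sat

# ASSUMPTION: the same orange surface seen at full and at half brightness,
# modeled as all three RGB channels scaling by the same factor.
bright = (240, 120, 0)
dim = (120, 60, 0)

bright_hue, bright_sat = hue_sat(bright)
dim_hue, dim_sat = hue_sat(dim)

print(f"bright: hue={bright_hue:.3f} sat={bright_sat:.3f}")
print(f"dim:    hue={dim_hue:.3f} sat={dim_sat:.3f}")
```

The raw RGB values differ by a factor of two, yet the hue and saturation of the two pixels come out the same, so a filter defined on hue and saturation accepts both.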

Seven color signatures

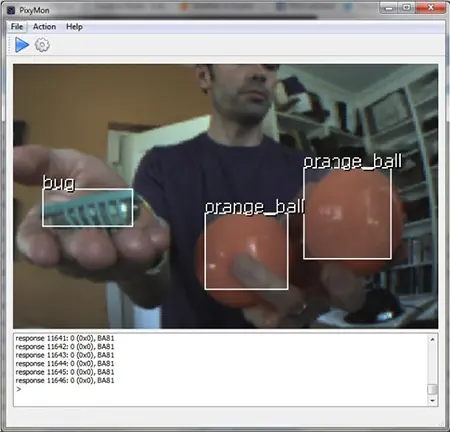

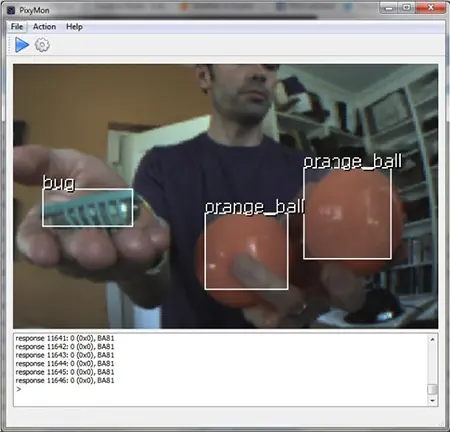

Pixy2's CCC algorithm remembers up to 7 different color signatures, which means that if you have 7 different objects with unique colors, Pixy2’s color filtering algorithm will have no problem identifying them. If you need more than seven, you can use color codes.

Teach it the objects you’re interested in

Pixy2 is unique because you can physically teach it what you are interested in sensing. Purple dinosaur? Place the dinosaur in front of Pixy2 and press the button. Orange ball? Place the ball in front of Pixy2 and press the button. It’s easy, and it’s fast.

More specifically, you teach Pixy2 by holding the object in front of its lens while holding down the button located on top. While you do this, the RGB LED under the lens provides feedback about which object Pixy2 is looking at. For example, the LED turns orange when an orange ball is placed directly in front of Pixy2. Release the button and Pixy2 generates a statistical model of the colors contained in the object and stores it in flash. From then on, it uses this statistical model to find objects with similar color signatures in its frame.

Pixy2 can learn seven color signatures, numbered 1-7. Color signature 1 is the default signature. Teaching Pixy2 the other signatures (2-7) requires a simple button-pressing sequence.

PixyMon lets you see what Pixy sees

PixyMon is an application that runs on Windows, macOS, and Linux. It allows you to see what Pixy sees, either as raw or processed video. It also allows you to configure your Pixy, set the output port and manage color signatures. PixyMon communicates with Pixy over a standard mini USB cable. PixyMon is great for debugging your application.

Controller support

Pixy can easily connect to lots of different controllers because it supports several interface options (UART serial, SPI, I2C, USB, or digital/analog output), but Pixy began its life talking to Arduinos. Support for Arduino Due, Raspberry Pi and BeagleBone Black has since been added.